Season two of Westworld might have just wrapped, but the conversation about the benefits and perils of creating extremely human-like robots keeps going.

When we think of robots, often we imagine a clunky humanoid made from metals and plastics. Yet the concept that robots might one day reach a point where they are almost indistinguishable from humans is nothing new.

For decades, sci-fi novels, movies like Blade Runner, and TV programs such as Westworld have explored the physical, psychological and ethical challenges of living alongside synthetic, artificially intelligent counterparts that – at least at face value – look, think and feel like us.

Creating the robots of Westworld

Often, the focus has been on characteristics such as intelligence, empathy, the robots’ external appearance and how we interact with them. However, the creators of Westworld have gone a step further, giving the robots, or ‘hosts’, internal structures very much like our own, so that they can move and communicate almost as seamlessly as we do. They’ve even made it possible for the hosts to eat, sleep and, yes, even poop.

For this to happen, the robots would need to have highly advanced synthetic organs that mimic the heart, kidneys and intestines, on top of their CPU ‘brain’, making their design and manufacture a complex process.

In the first season, viewers are given a glimpse of the robots’ evolution from more traditional structures to 3D-printed, hyper-realistic versions containing individual muscle fibres and ligaments.

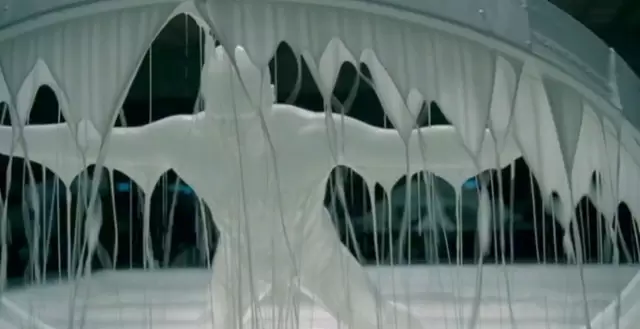

Although these muscle fibres were computer generated, VFX team lead Jay Worth revealed in an interview with Inverse that the scenes in which bodies were dipped in a white liquid to create the skin and fat layers were practical effects, made with a mixture of glue and water.

Worth even considered the technologies that would be required to make the eyes look organic, including the use of pigments and the best way to fabricate the iris.

How far away are Westworld-like robots?

While examples such as Hanson Robotics’ Sophia or Boston Dynamics’ Atlas show our progression towards more life-like robots, we are still a long way from having androids as sophisticated as those in Westworld.

In fact, most researchers are currently moving away from creating life-like robots. In a piece published by WIRED for HBO, UC Berkeley Professor Anca Dragan, founder of the InterACT Lab, explained that one reason for this is that people are likely to overestimate the capabilities of a robot that looks like a human, creating “expectations we can’t deliver on for a long time”, as Dragan put it.

Plus, there’s the ‘uncanny valley’ effect. People often feel uncomfortable with robots that look almost like humans, but not exactly. So unless a human-like robot’s mimicry is flawless, it’s unlikely people will take up the idea wholeheartedly.

However, for those who are interested in pursuing Westworld-like robots, ‘The Real Life Westworld’ has a checklist of things you’ll need:

- Wi-Fi capabilities, as AI will likely be processed via the cloud and robots will need to be tracked in real time.

- Ability to realistically assess, identify and interact with their surroundings and other people. Most importantly, they will need to convey empathy and respond appropriately to subtle cues and gestures.

- Ability to process and understand multiple languages and take meaningful direction from conversation.

- Ability to hold natural conversations, including the use of subtle facial expressions to show emotion.

- An internal battery (most likely built into the torso) that could serve their large power requirements, as well as other structures that would help facilitate free movement.

- Human-like grip.

- Smooth and agile movement, particularly in terms of walking and running.

EQ in robots

Progress towards achieving these criteria is slow, but it is happening. Already, AI is becoming inextricably linked to the cloud, since it requires large amounts of high-quality data to learn from and can be prohibitively expensive to develop without it.

Google, Amazon and Microsoft have each launched their own cloud-based AI platforms, with Google’s open-source TensorFlow library proving especially popular with developers for building machine-learning models.

Meanwhile, Hanson Robotics has its own cloud-based AI that allows it to control its robots and process data at large scale. The company is also working with SingularityNET to create a decentralised marketplace where AI developers can swap resources and tools for tokens or other services via blockchain technology.

Creating ‘feelings’ of empathy and natural emotions in robots is tough to crack, though. Emotionally intelligent software programs exist, but creating a robot that can hold natural conversations, recognise facial expressions and change its behaviour based on previous interactions is a challenge. Hanson Robotics is working to build software that will give its robots these capabilities, along with using ‘Frubber’ (flesh rubber) skin so robots can make their own facial expressions.

Danielle Krettek, founder and principal of Google’s Empathy Lab, is also working to develop emotional intelligence in AI, in the hope of creating more human-like experiences. Although she has previously stated that she doesn’t believe true empathy is possible in machines, she hopes robots can make an “empathetic leap”, becoming more attuned to the nuances of human emotion so they can better imitate them.