Picking up an object from a cluttered table is a task humans can do without even thinking. But for robots, that’s a much harder task.

Although technology has come a long way over the past decade, a robot’s ability to grasp an object and adapt to change in an unstructured environment where the position of an object changes still remains elusive.

Roboticists from the Queensland University of Technology (QUT) are now working on this, developing an approach based on a neural network that allows a robot to scan its environment and map each pixel in order to grasp an object.

The new method has been developed by PhD researcher Douglas Morrison, Dr Jurgen Leitner and Distinguished Professor Peter Corke, all from QUT’s Science and Engineering Faculty.

“A very difficult problem in robotic grasping is the ability for a robot to look at an object that it’s never seen before and identify the best way for it to pick the object up,” Morrison said.

“We developed a method that takes a depth image of a scene, then uses a neural network to generate what we call a ‘grasp map’, which is essentially a heat map of good places on the objects to grasp.”

By mapping what is in front of it using a depth image, the robot doesn’t need to sample many different possible grasps before making a decision, avoiding long computing times.

The types of objects the robot can grasp are not limited, as long as they are not too large, heavy or small for a robot, with the team testing a wide variety of objects.

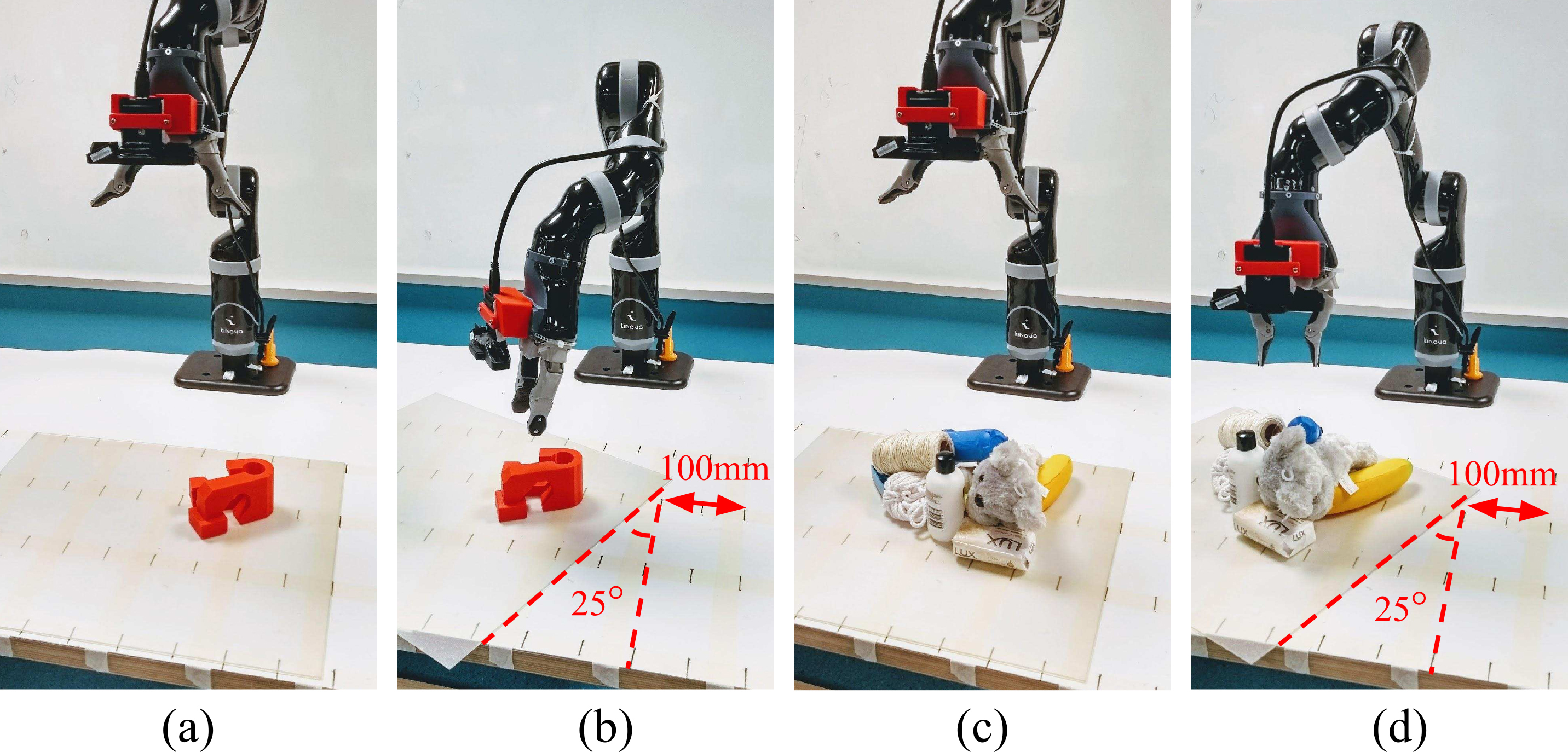

This included 3D-printed objects that are designed to be hard to grasp, as well as a variety of household objects that can typically be found on a desk.

Part of the tests included randomly moving objects around so that the robot had to react, as well as placing objects in a cluttered pile.

Tests showed accuracy rates of up to 88 per cent for dynamic grasping and up to 92 per cent in static environments.

“A lot of the challenges for robotic grasping revolve around perception and control, neither of which are perfect,” Morrison said.

“So we focus on designing a method which is robust to these issues, and we specifically show in our work that our technique is able to handle errors in our robot’s control.”

The way the robot scans an environment is through a depth camera mounted on its ‘wrist’.

Unlike a normal camera that captures images in colour, a depth camera measures the distance from the camera at each pixel.

“Having the camera on its wrist means that it moves with the robot, seeing more of the environment as it moves, rather than looking from a single viewpoint,” Morrison said.

The team then used a neural network they named Generative Grasping Convolutional Neural Network to detect where good areas were on an object to grasp it.

This approach overcomes a number of limitations of current deep-learning grasping techniques such as speed.

“A limitation of a lot of other robotic grasping techniques is their inability to quickly react to change,” Morrison said.

For example, he says they tend to observe objects from a single viewpoint and then blindly attempt to grasp them, meaning that if objects move or an initial estimate wasn’t correct, they might fail.

But in the team’s method, they were able to process the image in 20 ms and re-evaluate where the best place was to grasp the object.

This can be particularly important if objects move or if a robot is grasping an object from a cluttered pile where the entire environment can’t be seen from a single viewpoint.

The team is now looking at a number of ways to improve accuracy, including using trial and error from the robot to keep learning and improving, using more and different data to train the system how to detect grasps, and letting the robot choose better viewpoints for the camera for better images and grasp accuracy.