Building ethical robots might not be impossible, depending on your definitions. Nor is it a trivial problem.

“A way of thinking about our simple ethical robots is that they’re kind of ‘safety plus’,” explained Alan Winfield, professor of electronic engineering at the University of the West of England.

Winfield has been at the Bristol Robotics Laboratory (formerly the Intelligent Autonomous Systems Lab, which he co-founded) since 1993, but he’s only recently turned his attention to an important challenge: trying to make ethical robots.

The robots they’re using (Aldebaran’s NAOs) aren’t ‘full ethical agents’, as one might guess. However, the concept has been considered by moral philosophers, and a ‘moral health check’ has concluded that the robots do indeed possess a form of consequentialist ethics. This is achieved by a ‘consequence engine’: a robot simulator within a robot. The simulator models the robot and its environment, and regularly produces a set of predictions for the robot’s possible next actions.

“It’s not a new idea, but what we’ve done is we’ve made that work,” he said.

“Only a few people have done it, but we’ve made that work in real-time and hooked it up and we call it a ‘consequence engine’.”

As Winfield and his collaborators have coded it, the robots follow a simple rule:

IF for all robot actions, the human is equally safe

THEN (*default safe actions*)

Output safe robot actions

ELSE (*ethical action*)

Output robot action for least unsafe human outcome
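The rule above can be sketched in a few lines of Python. This is only an illustrative sketch, not the lab's code: the `Prediction` record, the numeric `human_danger` score and the `tolerance` parameter are all assumptions introduced here to stand in for whatever the consequence engine actually reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    """One simulated outcome for one candidate robot action (hypothetical)."""
    action: str
    human_danger: float  # assumed score: 0.0 = safe, larger = less safe
    robot_safe: bool     # assumed flag: is the robot itself safe?


def select_actions(predictions, tolerance=1e-9):
    """Apply the IF/THEN/ELSE rule to a list of predictions."""
    if not predictions:
        return []
    dangers = [p.human_danger for p in predictions]
    least = min(dangers)
    # IF for all robot actions, the human is equally safe
    if max(dangers) - least <= tolerance:
        # THEN (default safe actions): keep the actions safe for the robot
        return [p.action for p in predictions if p.robot_safe]
    # ELSE (ethical action): keep the actions with the least unsafe human outcome
    return [p.action for p in predictions if p.human_danger - least <= tolerance]
```

In this sketch, if no action changes the human's safety the robot simply looks after itself; as soon as one action leaves the human better off, the robot's own safety is ignored and only the human outcome counts.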

The experiments began in 2014, first with simple, Swiss-made e-pucks, then with NAOs. The ‘person’, a proxy human, would walk toward a danger zone (a hole) and, due to its ‘ethical’ programming, the robot would move towards the proxy human and nudge it off course so that it avoided the hole.

A couple of interesting things happened when a second proxy human was added to the scenario, also walking towards danger. Sometimes the robot saved both, but in 14 of 33 rounds it saved neither, due to dithering. Unlike a person, who might commit to a certain course of action, the robot’s lack of a short-term memory caused it to constantly re-examine the dilemma as it unfolded.

“It spent a fair bit of time changing its mind – it doesn’t have a mind, of course, but you know what I mean,” recalled Winfield.

“It took us a while to figure out, but it turned out to be quite simple. Because the robots re-calculate the consequences of the next of these 30-odd actions every half a second, from scratch, then it means that they can change their decision every half a second.”
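The dithering Winfield describes can be illustrated with a toy Python sketch. Everything here is hypothetical: the per-tick scores are made-up numbers, and the “committed” variant is just one possible contrast (a chooser that remembers its first decision), not something the article says the Bristol team implemented.

```python
def memoryless_choices(score_stream):
    """Pick the best-scoring target afresh at every tick, from scratch."""
    return [max(scores, key=scores.get) for scores in score_stream]


def committed_choices(score_stream):
    """Hypothetical contrast: decide once, then stick with that target."""
    choices = []
    target = None
    for scores in score_stream:
        if target is None:
            target = max(scores, key=scores.get)
        choices.append(target)
    return choices


def count_switches(choices):
    """Count how often the chosen target changes between consecutive ticks."""
    return sum(1 for prev, cur in zip(choices, choices[1:]) if prev != cur)


# Made-up scores, one dict per half-second tick; the two proxy humans are
# nearly tied, so small changes flip which one looks like the better rescue.
ticks = [
    {"human_A": 0.51, "human_B": 0.49},
    {"human_A": 0.48, "human_B": 0.52},
    {"human_A": 0.53, "human_B": 0.47},
    {"human_A": 0.49, "human_B": 0.51},
]

dithering = count_switches(memoryless_choices(ticks))
steady = count_switches(committed_choices(ticks))
```

With these numbers the memoryless chooser changes target on every tick, while the committed one never does. Committing has its own cost, of course: it would ignore genuinely new information.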

Whatever the robots’ shortcomings, they arguably showed it was possible to have some degree of ethical agency built in.

An IEEE Intelligent Systems paper on machine ethics by James Moor, which Winfield has cited with approval, identifies four different categories of ethical agency: ethical impact agents (a system that can be evaluated); implicit ethical agents (constrained to avoid unethical outcomes); explicit ethical agents (able to reason about ethics); and full ethical agents (able to justify judgements). It suggests a ‘bright line’ between the last two and, though an AI might never cross that line, Winfield argues his Asimovian example qualifies as an explicit ethical agent.

Autonomous systems

Winfield has been thinking about ethics and robots together for some time. He was part of the UK’s Engineering and Physical Sciences Research Council/Arts and Humanities Research Council workshop that drafted a set of Principles of Robotics in 2010. These guide the responsible design, construction and use of robots.

It was only about three or four years ago that he accepted the concept of a robot actually possessing ethics. In his 2012 Short Introduction To Robotics, he dismissed the idea as impossible in principle. (“Embarrassing”, he confessed.) He credits the conversion to a year’s worth of persuasion by a research collaborator, the University of Liverpool’s Professor Michael Fisher.

Winfield didn’t start his academic career thinking about robot ethics, though. He didn’t even start as a roboticist: his PhD, completed at the University of Hull, was in digital communications.

In 1984 he left university to start MetaForth Computer Systems, a spin-off company commercialising a computing architecture. He returned to academia in 1992 as Professor of Electronic Engineering at the University of the West of England, Bristol.

“I’d always been interested and had a boyhood science fiction interest in robotics, but didn’t get to actually realise that ambition until the early 90s,” he said.

Since co-founding the robotics lab with Chris Melhuish and Owen Holland, Winfield has researched multiple robotics genres. This has included AI, swarm and social robotics, and evolutionary robotics.

Alan Winfield speaking at the World Economic Forum. (Photo: World Economic Forum)

He also has a keen interest in public engagement (serving as Director of the UWE Science Communication Unit) and listening to and addressing concerns around his field, which is notoriously prone to sensationalist news items.

“One thing that I try and do in my public talks is explain that, actually, real robots in many ways are much more interesting and very different to movie robots,” he explained.

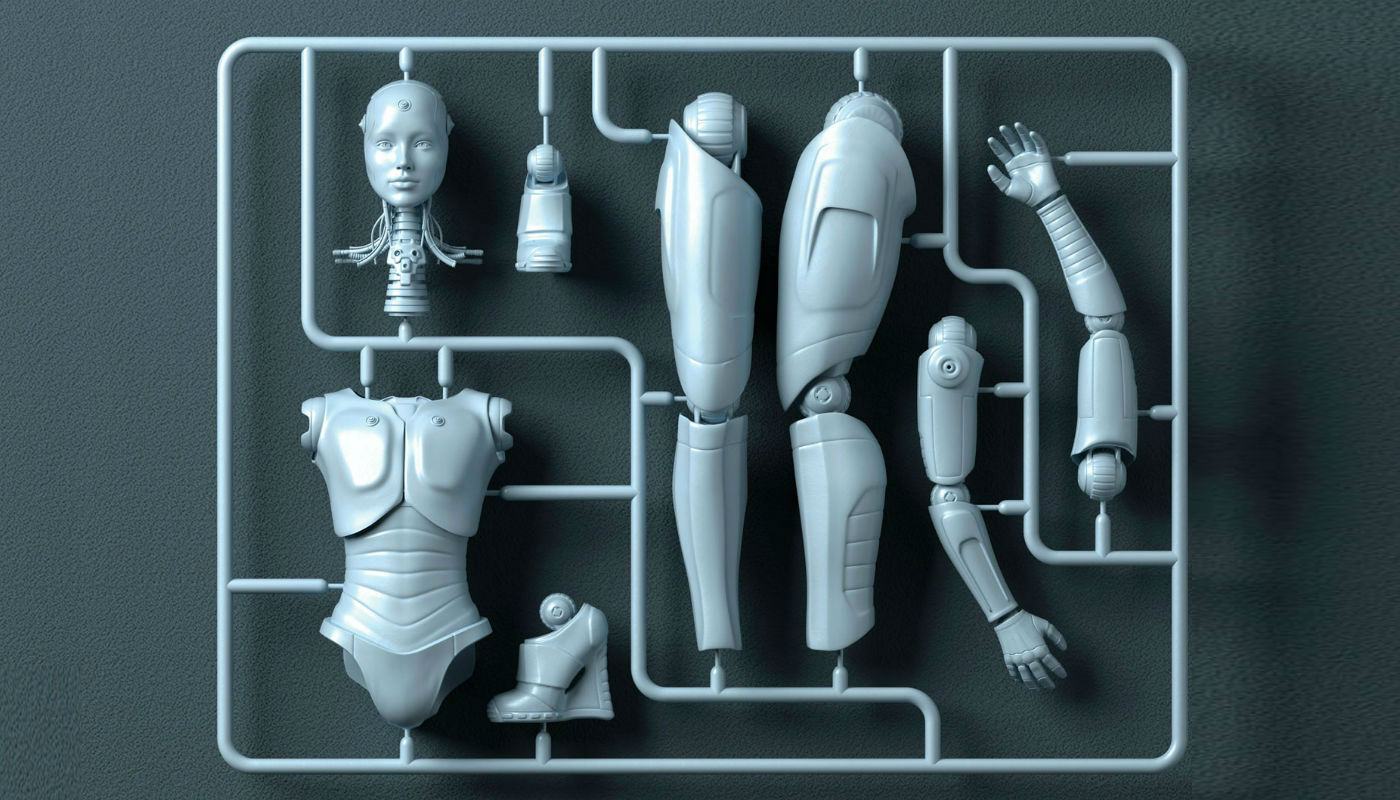

He points to what he calls a “brain/body mismatch” problem. Though we can build high-fidelity, lifelike, humanoid robots – the kind favoured by Hollywood – these do not have intelligence that matches their appearance (“a real ethical problem”).

Not surprisingly, he is also regularly asked about human-level AI, and believes this is something “hundreds of years” off, if it’s ever seen. Concerns need to be taken seriously, though.

“It’s really important that society as a whole should decide on the kind of robotics that we want in our lives and in our society, and perhaps, importantly, the kind of robotics that we don’t want,” he said.

Robo-ethics become important

The days of mainstream autonomous cars are approaching. Carnegie Mellon University Dean of Computer Science Andrew Moore – who, like Winfield, was invited to address this year’s World Economic Forum on intelligent machines – points out that difficult ethical issues are raised for programming such vehicles, and the public needs to be included in the conversation.

Take an animal on the road, for example. A vehicle must ‘decide’ whether to drive on and kill the animal (1 in 1,000,000 chance of hurting the driver, while killing the animal) or swerve to avoid it (1 in 100,000 chance of hurting the driver, while saving the animal).

“[And] someone has to write that number: how many animals is one human life worth? Is it a thousand, a million or a billion?” Moore asked the Davos audience. Roboticists confess such issues aren’t easily solved. Nor are they trivial. As AI proliferates, the idea of a “consequence engine” can only gain in consequence.
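To see why “someone has to write that number”, the animal-on-the-road figures can be worked through in Python. This is a deliberately crude sketch under loud assumptions: it treats “hurting the driver” as equivalent to losing one human life, and it compares outcomes by simple expected value. Neither assumption comes from Moore, and the function and variable names are invented for illustration.

```python
from fractions import Fraction

# Figures from the example: chance of hurting the driver in each case.
p_hurt_if_drive_on = Fraction(1, 1_000_000)
p_hurt_if_swerve = Fraction(1, 100_000)

# Extra risk the driver takes on if the car swerves to save the animal.
extra_risk = p_hurt_if_swerve - p_hurt_if_drive_on  # 9 in 1,000,000

# Assumption: one "hurt" counts as one human life. The break-even exchange
# rate is then the number of animals per human life at which both options
# have the same expected cost.
break_even_animals_per_human = 1 / extra_risk  # 1,000,000/9, about 111,111


def should_swerve(animals_per_human_life):
    """Swerve only if one animal outweighs the driver's expected extra harm."""
    animal_value = Fraction(1, animals_per_human_life)  # in human-life units
    return animal_value > extra_risk
```

Under these assumptions, a programmer who answers “a thousand” has told the car to swerve, while one who answers “a million” or “a billion” has told it to drive on. The decision flips at roughly 111,000 animals per human life, which is why the number cannot be left unwritten.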

What not to build

Okay, so we know a bit more about what to aim for when building ethical robots. But what are the big no-nos?

Winfield has some strong feelings about building certain kinds of machines.

“I get very angry, I have to say, with research colleagues who work on android robots,” he said.

It comes down to the brain/body mismatch issue. Suggesting that a robot can think like a human is a deception. The EPSRC/AHRC principles (bit.ly/ethicalrobots) he helped write argue that nothing designed should be able to dupe a person, and a high-fidelity android does that.

Basically the packaging shouldn’t make a promise that the intelligence can’t fulfil. A two-way intellectual or emotional engagement with a robot is impossible, and there should be no possibility of a user expecting this.

“The point is that when you see a robot vacuum cleaner, a round thing that runs around on the floor, you’re not going to assume you can have philosophical conversations with that vacuum cleaner,” Winfield said.

The possibility of deceiving a user can increase for vulnerable groups such as the disabled, children, and the elderly. This can happen when a robot appears to possess feelings or volition. As the principles point out: ‘Robots are manufactured artefacts: the illusion of emotions and intent should not be used to exploit vulnerable users.’

Humanoid robots aren’t a problem, per se, but Winfield favours those that have non-gendered voices (he believes gendered robots are unethical) and are cartoonish in nature.

“The important ethical principle, as far as I’m concerned, is that you and I know that it’s an AI,” he said.

“It should be always advertised as an AI. So the machine nature, if you like, of the thing you’re interacting with should be completely transparent and evident.”