Curtin University engineer Andrew Woods delights in using data analysis technology to visualise everything from ocean depths to distant planets.

In an unassuming section of Curtin University’s Bentley Campus, a team of engineers is poring over reams of data, interfaces and equipment that would look more at home in a science fiction movie than in a former art gallery space.

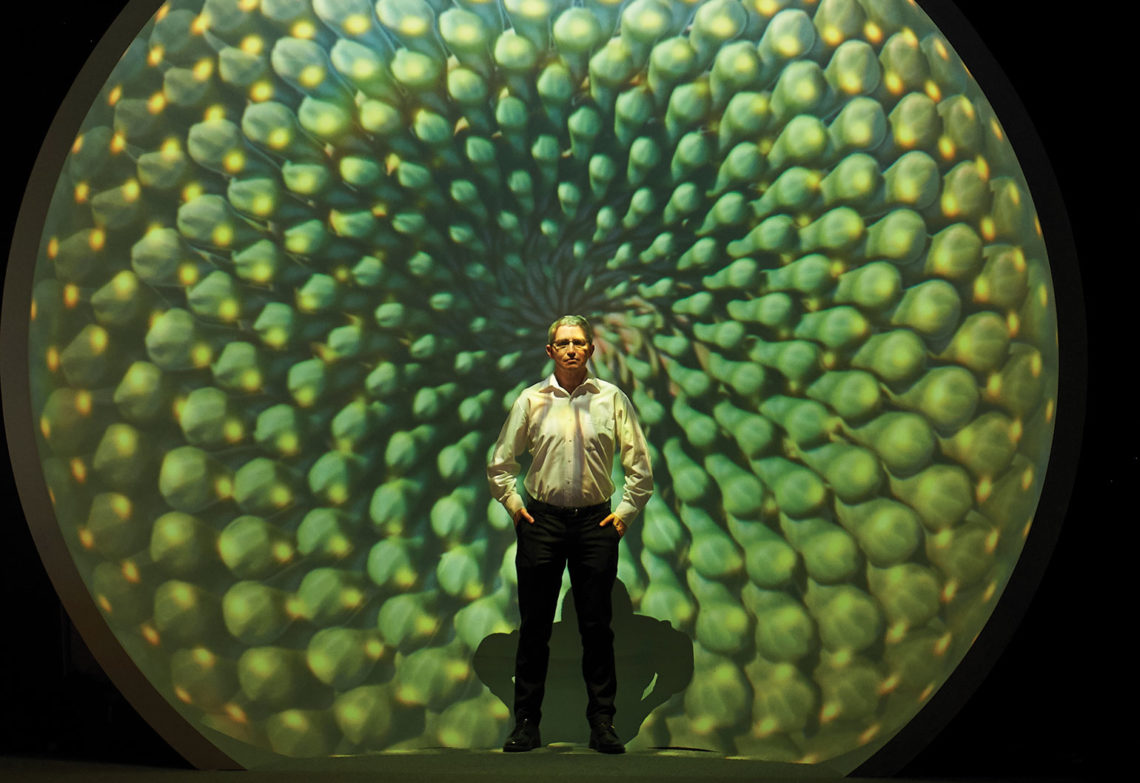

The work underpins the main purpose of The Curtin HIVE, or Hub for Immersive Visualisation and eResearch, which supports all forms of research via visualisation and allied technologies such as virtual reality and augmented reality.

HIVE Manager Dr Andrew Woods is an Engineers Australia Member and a Fellow of the International Society for Optical Engineering. He and his team have acted as the common thread for more than 200 research projects from wide-ranging disciplines across science and engineering, health sciences, humanities and business.

One of the largest projects supported by the HIVE, the Sydney-Kormoran Project, was born out of a conversation between Woods and colleague Dr Andrew Hutchison over a coffee in 2011.

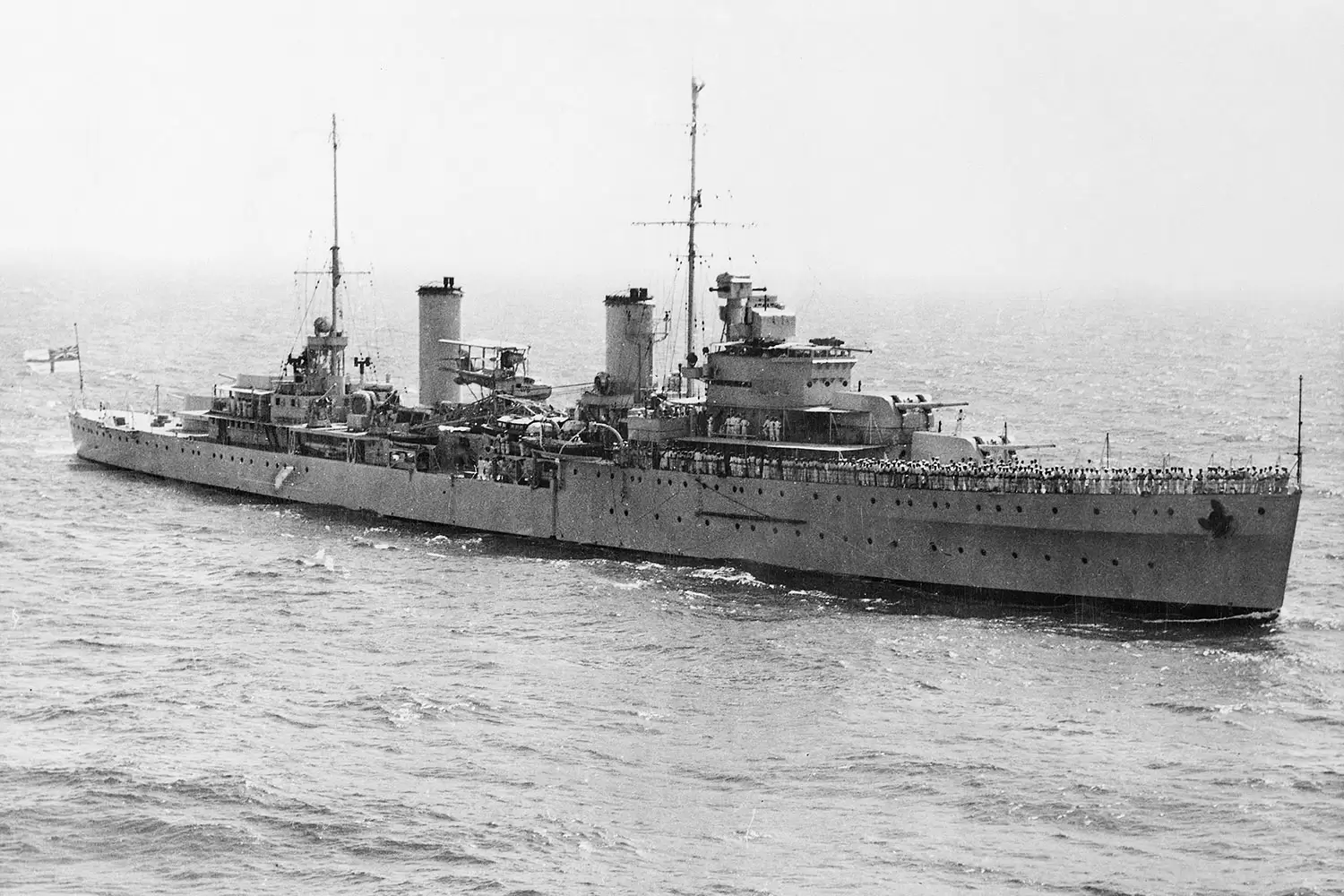

The two discussed interpreting and exhibiting footage of two shipwrecks: the HMAS Sydney (II) and the German auxiliary cruiser that sank it, the HSK Kormoran.

The HIVE evolved in tandem with the Sydney-Kormoran Project, for which Woods and his team used the HIVE to create and visualise 3D reconstructions of the shipwrecks.

Lost in 1941 during World War II, the fate of HMAS Sydney (II) was Australia’s biggest wartime mystery.

The ship had disappeared without a trace until its wreck was discovered in 2008, roughly 200 km due west of Shark Bay, sitting at 2500 m water depth.

The initial team found the wrecks using deep-tow side-scan sonar, the same technique used to try to locate Malaysia Airlines Flight MH370, which went missing in 2014. They then used a remotely operated vehicle (ROV) to collect 1500 photos and 40 hours of video.

Woods said that the 2008 footage only scratched the surface. It kick-started a process culminating in an expedition team from Curtin University, the Western Australian Museum and DOF Subsea that comprehensively surveyed the wrecks in 2015.

In order to capture the best possible footage in an environment devoid of natural light, Woods led the development of the most complex lighting and camera system that has ever been deployed in deep water in Australia.

They customised two industry ROVs with aluminium frames, hydraulic rams, cameras and two arrays of LED lights rated to 6000 m water depth, each consuming 3 kW of power, to produce 200,000 lumens of light, equivalent to 250 home lightbulbs.

On each ROV were mounted two high-definition 3D video cameras on a pan and tilt unit. There were seven digital still cameras per vehicle with each capturing a photo every five seconds.

Six cameras were equipped with Ethernet interfacing to the surface, enabling the research team to study the subsea imagery in real time and perform quality assurance checks.

By the end of the 2015 expedition, the team had collected more than half a million images and more than 300 hours of video. Some of this material has already been used to produce two 3D short films, which are screening at the Museum of Geraldton, the Shark Bay World Heritage Discovery Centre, and the Australian National Maritime Museum in Sydney.

Processing power

Woods said the team encountered difficulties processing the imagery due to the sheer size of the dataset.

“Despite the technique of generating 3D models from a series of photographs being very widely used, most of the commercially available software packages max out at around 10,000 images,” he said.

“With half a million photos to process, using traditional techniques, it would take us 1000 years.”

The team is writing its own codebase to run at the nearby Pawsey Supercomputing Centre and has already used four million core hours of processing power.

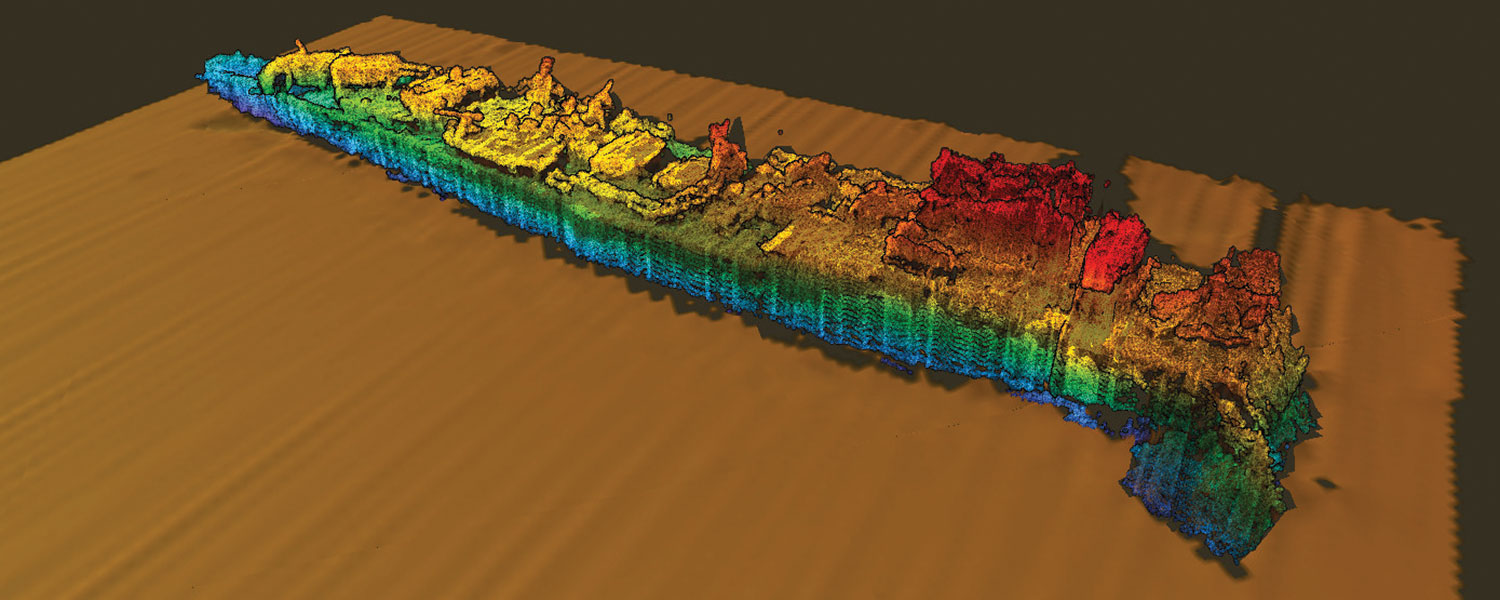

Woods said that he is still some years away from creating a fully detailed 3D render of these wrecks, which is being generated using a photogrammetric 3D-reconstruction technique.

The technique compares each image with every other image captured in 2015 by performing a feature-matching process that searches for similar edges and textures and tries to mathematically match common points from image to image.
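A rough sense of why the dataset size matters comes from counting image pairs. The sketch below assumes an exhaustive comparison, as described above, and treats "half a million" as exactly 500,000 images; the function name `pair_count` is illustrative only and is not taken from the team's codebase.

```python
def pair_count(n_images: int) -> int:
    """Number of unordered image pairs when every image is compared with every other."""
    return n_images * (n_images - 1) // 2


# Roughly the size at which Woods says commercial packages max out.
small = pair_count(10_000)
# "Half a million" images, assumed here to be exactly 500,000.
full = pair_count(500_000)

print(f"{small:,} pairs for 10,000 images")
print(f"{full:,} pairs for 500,000 images")
print(f"about {full / small:,.0f} times as many pairs")
```

Under these assumptions, 50 times as many images means about 2,500 times as many comparisons, because the pair count grows with the square of the number of images.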

“Our program identifies 10,000 to 20,000 individual features per image, which are matched to corresponding features in other images,” he said.

“Once the images are feature-matched they go through a bundle adjustment process to determine camera position, camera orientation and lens focal length.”
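The matching step can be illustrated with a toy example. The article does not say which feature descriptors or matching rule the team's software uses, so the sketch below makes its own assumptions: each feature is a short tuple of numbers, similarity is Euclidean distance, and ambiguous matches are discarded with a ratio test, a commonly used heuristic. The name `match_features` and the sample values are hypothetical.

```python
from math import dist


def match_features(desc_a, desc_b, ratio=0.75):
    """Return (i, j) pairs where feature i of image A matches feature j of image B.

    A match is kept only when the nearest candidate in B is clearly closer
    than the second nearest (a ratio test), which drops ambiguous matches.
    """
    matches = []
    for i, a in enumerate(desc_a):
        # Rank every feature in the other image by distance to this one.
        ranked = sorted((dist(a, b), j) for j, b in enumerate(desc_b))
        if len(ranked) < 2:
            continue  # the ratio test needs at least two candidates
        (best, j), (second, _) = ranked[0], ranked[1]
        if best < ratio * second:
            matches.append((i, j))
    return matches


# Made-up three-number descriptors; real descriptors are far longer.
image_a = [(0.0, 1.0, 0.0), (1.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
image_b = [(1.0, 0.1, 0.0), (0.0, 0.9, 0.1), (0.9, 0.9, 0.9), (0.1, 0.1, 0.1)]

print(match_features(image_a, image_b))
```

In this toy data the third feature of `image_a` sits about equally far from two candidates in `image_b`, so it is rejected rather than matched on a coin toss.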

The process involves projecting the feature-matched points into 3D space, which produces a point cloud.

A mesh is then laid across the surface of the point cloud, and, finally, the original images are reprojected onto the mesh to produce a photo-realistic digital 3D model of the original object.

“We are essentially shrink-wrapping a virtual model of the ships so that eventually we will have a very accurate 3D shape with a photographically realistic surface,” Woods said.
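The step that turns matched features into points in 3D space can be sketched with a simple two-view triangulation. This is a textbook midpoint method, not necessarily what the team's software does. It assumes the camera positions and viewing directions are already known (for example, from bundle adjustment), and the function name and coordinates are made up for illustration.

```python
def dot(u, v):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(u, v))


def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two lines of sight.

    Each line starts at a camera centre c and runs along direction d.
    The estimate is the midpoint of the shortest segment joining the lines.
    """
    w = [p - q for p, q in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("lines of sight are parallel; no unique 3D point")
    t1 = (b * e - c * d) / denom  # distance parameter along the first line
    t2 = (a * e - b * d) / denom  # distance parameter along the second line
    p1 = [p + t1 * k for p, k in zip(c1, d1)]
    p2 = [p + t2 * k for p, k in zip(c2, d2)]
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


# Two hypothetical cameras one unit apart, both sighting the same feature.
point = triangulate_midpoint((0, 0, 0), (0.5, 0, 2), (1, 0, 0), (-0.5, 0, 2))
print(point)
```

Repeating this for every matched feature would give one point per feature, which is the sense in which the matched images produce a point cloud.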

The imagery obtained in 2008 corroborated the HSK Kormoran crew survivor accounts, which said that the Sydney (II) sustained significant damage to the bridge early on in the confrontation, while multiple gun turrets suffered catastrophic damage.

Similarly, the 2015 processed data shows roughly 40 per cent of the Kormoran remained intact after the crew was forced to scuttle the ship due to fires started by shelling from the Sydney (II).

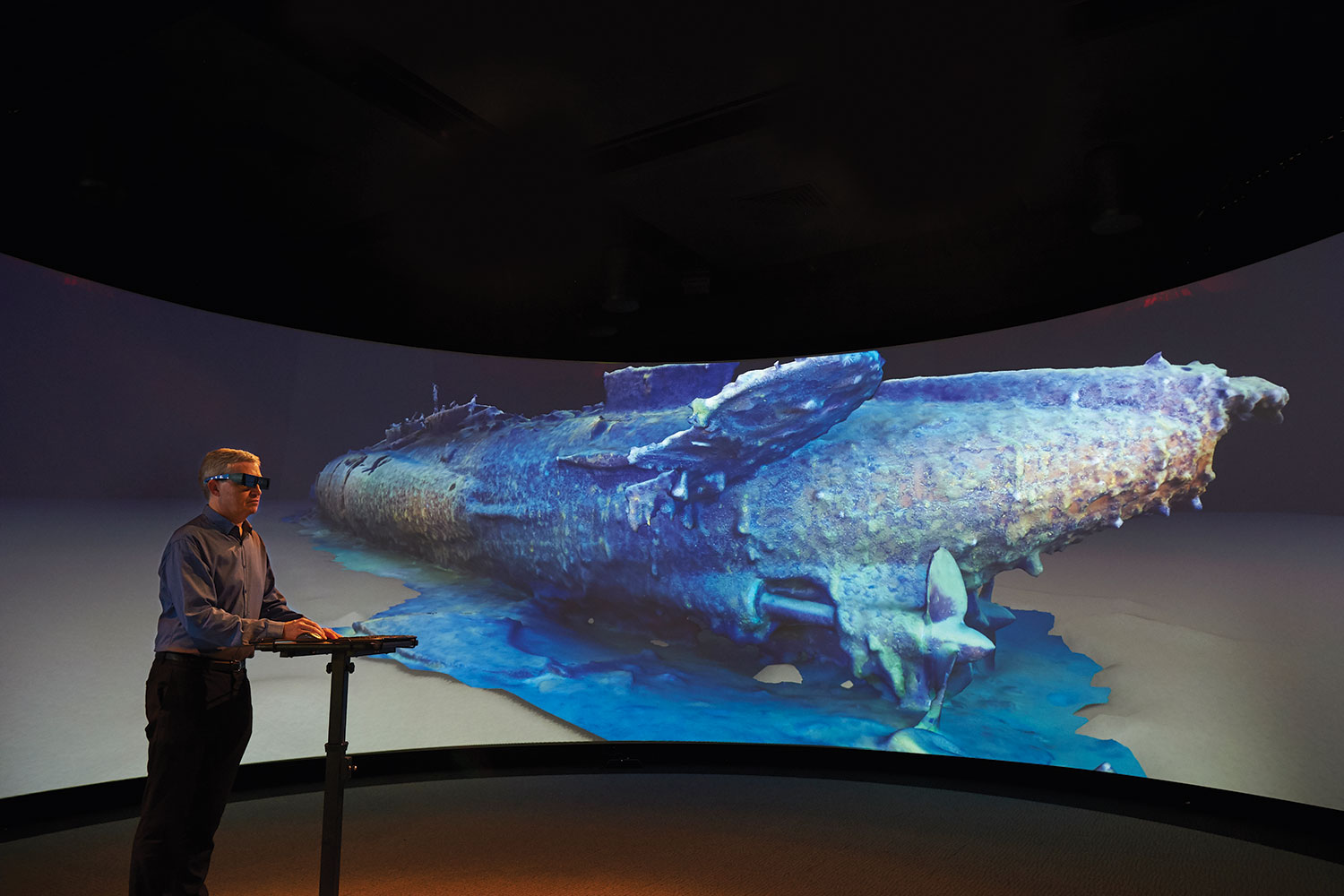

More recently, Woods has applied similar techniques to those used in the Sydney-Kormoran Project to reconstruct the wreck of Australia’s first submarine, HMAS AE1, which was lost at sea off Papua New Guinea in 1914.

Reaching 55 m in length and with a simpler superstructure, AE1 was documented with just 8000 images, which were processed on conventional computers.

Woods presents the AE1 reconstruction on The Cylinder, the HIVE’s 180-degree projection surface. He said AE1 seems to have been lost in a diving accident, with one of the ventilation valves near the conning tower found in the 60 per cent open position.

“The hydroplanes, which control elevation, were in the hard-to-rise position, so they would have been trying to return to the surface, but the water gushing in would have flooded the batteries and disabled the electrics, so there would have been no thrust.

“A realistic reconstruction of the AE1 allows us to visually present the wreck to visitors as it looks now after being on the sea floor for over 100 years. It also allows maritime archaeologists to virtually examine the wreck.”

Computing power

The work on HIVE’s Sydney-Kormoran Project is made possible in part by the processing power of the Tier-1 HPC Magnus at the Pawsey Supercomputing Centre.

Pawsey Centre Head of Supercomputing David Schibeci said the virtual archaeology aspect of the work was in line with the out-of-the-box style of projects that the Centre likes to support.

He said Magnus processed the initial dataset in six months, rather than the 17 years it would have taken on conventional computers.

He attributed this to Magnus’s high-speed, low-latency interconnect, which allows the individual nodes to talk together very quickly and is the main point of difference from conventional computers.

Schibeci said that across the range of projects the centre supports, they are seeing a shift from data-from-science to science-from-data.

“Rather than running a simulation which produces a mountain of data, researchers are trying to mine the data they have available to help understand the world around us,” he said.

Range of motion

More recently, the HIVE team has been collaborating with academics from Curtin’s Faculty of Health Sciences to help a patient with quadriplegia regain movement.

In May 2016, Gary Barnes suffered a serious workplace accident that severely damaged his spinal cord and left him with only limited movement and control in his arms and legs.

In mid-2018, Associate Professor Marina Ciccarelli, an occupational therapist, and Dr Susan Morris, a physiotherapist, planned a virtual reality intervention to improve Barnes’s upper limb function and help him become more independent in activities of daily living.

To fulfil Barnes’s goal of cooking a steak on the barbecue, Curtin HIVE computer scientist Michael Wiebrands developed a virtual reality cooking simulation in a platform called Unity.

The simulation is displayed on an HTC Vive headset, and handheld controllers are attached to Barnes’s hands. It is also projected on the HIVE Wedge display, letting Ciccarelli and Morris see Barnes’s performance in his virtual world, while assessing his real-world actions.

Woods said the handheld controllers provide highly accurate information about where Barnes’s arms are positioned and their range of motion.

“We altered the controllers so that Gary’s movements in the real world are amplified in the simulation,” Woods said.

“He only has a small range of motion now and, in the simulation, it looks like he is doing big motions. He has to reach over, pick up raw steak and season it, and then cook it on the hot plate — turning it at the right time to ensure it is cooked perfectly.”
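The amplification Woods describes can be sketched as scaling a controller's displacement from a rest position. The actual simulation was built in Unity and its code is not shown in the article, so the Python below is only an illustration: the function name `amplify`, the gain value and the coordinates are all assumptions.

```python
def amplify(real_pos, rest_pos, gain=4.0):
    """Map a real controller position to an amplified virtual position.

    The displacement from the rest position is multiplied by `gain`,
    so a small real movement becomes a large virtual one.
    """
    return tuple(rest + gain * (real - rest) for real, rest in zip(real_pos, rest_pos))


# Hypothetical positions in metres: the hand moves 0.25 m sideways and 0.5 m forward.
rest = (0.0, 1.0, 0.0)
real = (0.25, 1.0, 0.5)

print(amplify(real, rest))
```

With a gain of 4, the virtual hand in this example travels 1 m sideways and 2 m forward while staying at the same height. A real system would presumably tune the gain to the individual and could reduce it as range of motion improves, though the article does not say how the HIVE team set it.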

“Virtual reality is immersive and imaginative, which allows us to provide a motivating and low-risk rehabilitation environment,” Morris said.

“Simulations can be developed and tested in the HIVE, and then the VR system and software can be easily used in the home environment. Gary uses the simulation four days a week at home in addition to the other physical and occupational therapy treatment he receives.”

Ciccarelli agreed.

“Rehabilitation in the 21st century relies on a close partnership between people living with disability, therapists who work with them to regain independence, and engineers who have the technology solutions,” she said.

“This project at the HIVE is a great example of the successful collaboration between different disciplines at the university.”

Cosmic encounters

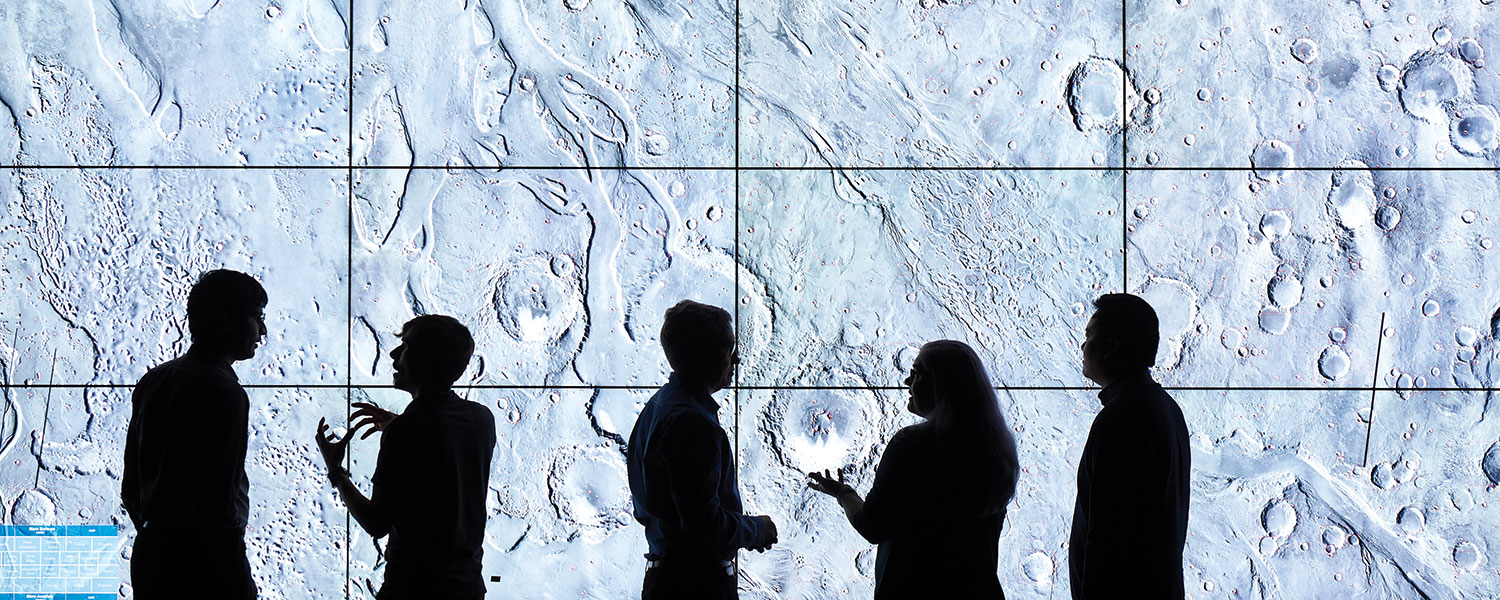

At the time of create’s visit to HIVE, the HIVE Tiled Display showed impact craters on the surface of Mars in clear detail.

“Normally, screens of this size do not drive each pixel individually,” Woods said.

“They usually have a single HDMI input which gets daisy-chained across each individual screen and each monitor picks out a corner of the image to display.

“In this case we have configured the system so that each pixel is controlled individually, so that researchers can see the big picture in incredible detail and you can also zoom in and see a tiny detail in crystal clear quality as well.”

This particular project is a collaboration between Professor Gretchen Benedix from Curtin’s Planetary Science Group, the Curtin Institute for Computation (CIC) and HIVE.

Each impact crater is outlined with a red square, which is the result of a special CIC-designed machine-learning algorithm that automatically identifies and classifies the craters based on the sensor data.

Woods said the CIC team set up a neural network and provided a training dataset so that the algorithm could learn the characteristics of what is and what is not a crater.

“It is important to identify and count the craters as a way to date Mars’ surface, which has altered over time through volcanic activity or by the presence of liquid water, which at one point may have been on the surface,” he said.

“This sort of surface activity determines which meteorite craters will and won’t be visible, so based on the density of meteorite impacts, you can roughly determine the age of the surface and when it was last resurfaced.”
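The reasoning in that quote can be illustrated by comparing crater densities between regions. The sketch below uses made-up counts and areas and only ranks regions by relative age. Turning a density into an actual age needs a calibrated chronology and crater size information, which the article does not cover and which is not attempted here.

```python
def crater_density(crater_count, area_km2):
    """Craters per million square kilometres."""
    return crater_count * 1_000_000 / area_km2


# Hypothetical regions: (number of craters counted, surface area in km^2).
regions = {
    "region_a": (120, 40_000),
    "region_b": (30, 50_000),
}

densities = {name: crater_density(n, area) for name, (n, area) in regions.items()}

# A more densely cratered surface has gone longer without being resurfaced.
oldest_first = sorted(densities, key=densities.get, reverse=True)

print(densities)
print(oldest_first)
```

Here the first region has five times the crater density of the second, which would suggest it was resurfaced longer ago.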

HIVE’s latest display system, the Hologram Table, rear-projects a 3D image from beneath the table surface. This enables users to move around the table, seeing an object from different angles as though it were a hologram.

Tracking cameras follow two pairs of 3D glasses, showing the content with geometry specific to where the users are located.

On the day of create’s visit, the table was showing topographical data derived from a spacecraft orbiting Mars, illustrating the landing site of the next NASA Mars Rover, which will launch in 2020 and arrive at Mars in 2021.

This article originally appeared as “Seeing clearly” in the September 2019 issue of create magazine.