Skin cancer is among the most commonly diagnosed cancers in Australia each year.

Cancer Council Australia said an estimated two in three people will be diagnosed with the disease by the time they’re 70.

One of the key methods of checking for skin cancer is self-examination, which can include looking for new spots or for changes in the shape or colour of existing spots.

However, self-examinations can be subjective and bothersome. New technology from a team led by Dr Anders Eriksson, Future Fellow from the Queensland University of Technology (QUT) School of Electrical Engineering and Computer Science, could make it easier for people to detect skin cancer at an early stage.

The world-first technology aims to automate skin self-examinations with artificial intelligence (AI), drawing on recent advances in imaging technology, computer vision and machine learning.

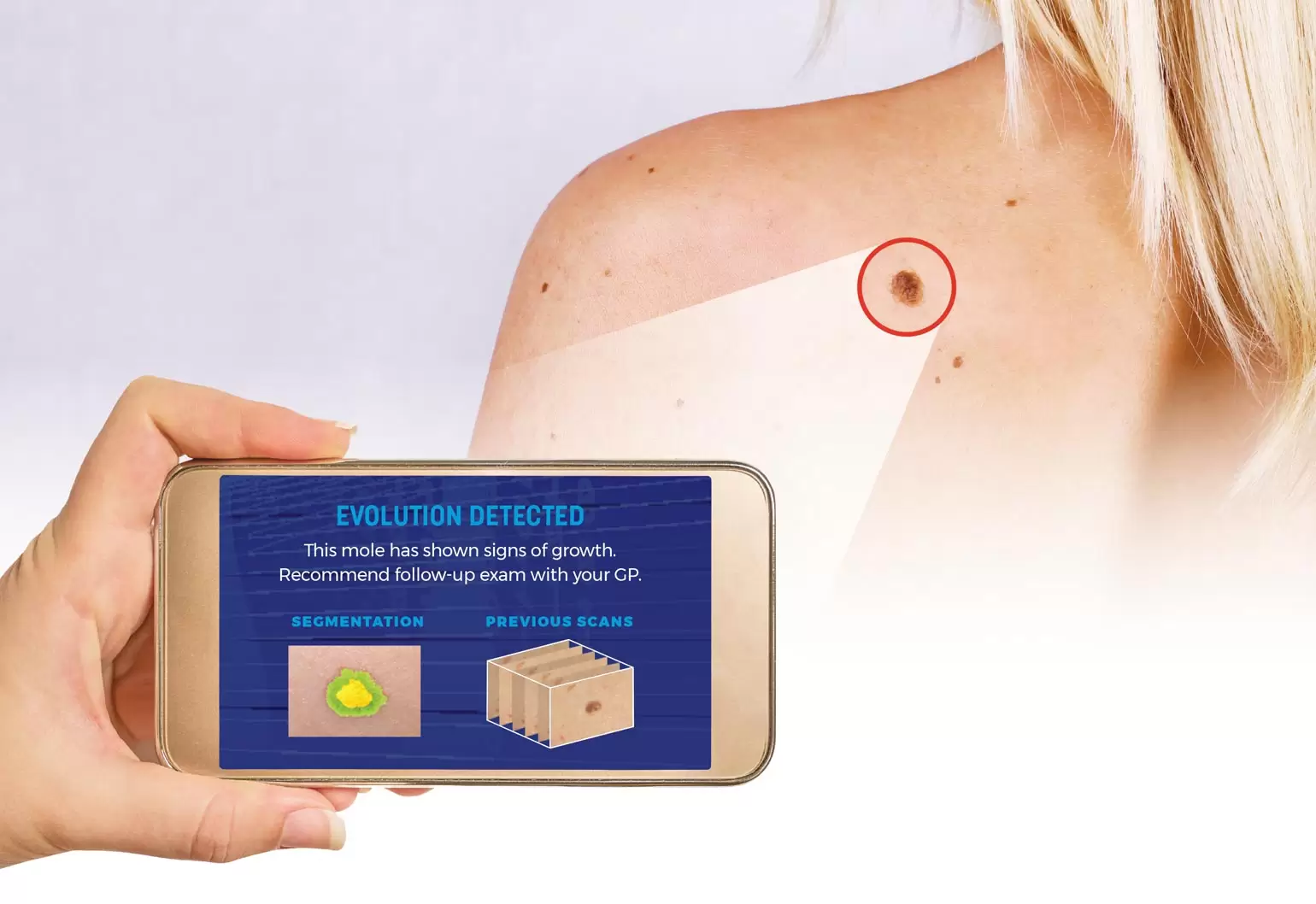

This would allow people to carry out a full-body skin mapping scan of their moles and lesions in the comfort of their home via a smartphone or camera.

The research team is using machine learning in two different ways: to allow people to use their smartphones to carry out a full-body scan without the need for any form of training, and to help detect suspicious moles that might need further clinical analysis.

“The main advantage of our approach is a unified architecture that will facilitate the model estimation procedure, and a unique ability to capture hidden interactions among the different features,” Eriksson said.

“We expect our approach to achieve a sensitivity and accuracy of early skin cancer diagnosis close to that of a trained operator.”
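For readers unfamiliar with the terms, sensitivity is the share of actual cancers a test correctly flags, while accuracy is the share of all cases, cancerous or not, that it classifies correctly. The short Python sketch below shows the two calculations. The counts are invented purely for illustration and are not results from the QUT project.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of actual positives that were flagged: TP / (TP + FN)."""
    return true_pos / (true_pos + false_neg)


def accuracy(true_pos: int, true_neg: int, false_pos: int, false_neg: int) -> float:
    """Share of all cases classified correctly: (TP + TN) / total."""
    total = true_pos + true_neg + false_pos + false_neg
    return (true_pos + true_neg) / total


# Hypothetical counts for 1,000 examined lesions (illustration only).
tp, tn, fp, fn = 45, 900, 50, 5

print(sensitivity(tp, fn))       # 0.9   (45 of 50 cancers caught)
print(accuracy(tp, tn, fp, fn))  # 0.945 (945 of 1,000 cases correct)
```

The two figures can diverge: a system that flagged nothing at all would still be 95 per cent accurate on these counts while catching no cancers, which is why sensitivity is reported alongside accuracy.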

While the system will be able to detect melanomas at any stage, the team has developed it to focus on early detection, as mortality rates associated with skin cancer are directly related to how long it has been growing unnoticed.

“In particular, we will focus on utilising image sequences obtained using standard consumer handheld devices, such as smartphones or digital cameras, to maintain affordability,” Eriksson said.

The technology would work by having a user scan their body with their smartphone to take a large number of high-resolution images. These images would then be sent to a remote server for processing.

Minutes later, a complete 3D reconstruction of their body would be returned.

This would include an identification, analysis and assessment of every mole and lesion, which would be compared with previous scans to evaluate any changes in appearance and automatically flag discoveries that need further clinical analysis.
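The scan-to-scan comparison described above could, in its simplest form, be sketched as follows. This is not the team's method: the `Mole` record, the matching of moles by ID, the use of diameter as the only measurement, and the 20 per cent threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mole:
    """Hypothetical record for one mole found in a scan."""
    mole_id: str        # assumed to be stable across scans
    diameter_mm: float  # assumed to be positive


def flag_changes(previous, current, threshold=0.2):
    """Return (mole_id, reason) pairs for moles worth a closer look.

    A mole is flagged if it is absent from the previous scan ("new") or
    if its diameter changed by more than `threshold` as a fraction of
    its earlier size ("changed").
    """
    earlier_by_id = {m.mole_id: m for m in previous}
    flagged = []
    for mole in current:
        earlier = earlier_by_id.get(mole.mole_id)
        if earlier is None:
            flagged.append((mole.mole_id, "new"))
            continue
        relative_change = abs(mole.diameter_mm - earlier.diameter_mm) / earlier.diameter_mm
        if relative_change > threshold:
            flagged.append((mole.mole_id, "changed"))
    return flagged


# Made-up measurements from two scans of the same person.
last_scan = [Mole("a", 4.0), Mole("b", 3.0)]
this_scan = [Mole("a", 4.1), Mole("b", 4.0), Mole("c", 2.0)]

print(flag_changes(last_scan, this_scan))
# [('b', 'changed'), ('c', 'new')]
```

A real system would also need to match moles between scans from the images themselves and to track shape and colour, neither of which this sketch attempts.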

To detect changes in moles and lesions, the team has trained its network on a large number of images of moles that have been hand-labelled and, in many cases, verified through biopsies.

“The main challenge related to data collection is obtaining labels on the data. There are vast amounts of images of moles available out there, but most of them do not have a label,” Eriksson said.

“Typically, the labelling we have was based on expert opinion, including dermatologists and dermatopathologists.”

The project is still in the early stages, and Eriksson, who has been researching AI, machine learning, and computer vision for more than 15 years, said there is no data on accuracy yet.

“However, work at Stanford University has shown that AI can perform just as well as, and often even better than, trained dermatologists when it comes to diagnosing skin cancer from images,” he said.

Although the research is still in development, Eriksson anticipates the technology will be ready within the next three years.

“All the technologies that our algorithms build on are well understood and quite mature. In addition, there is no need for real-time results using this app,” Eriksson said.

“A waiting time of five to 10 minutes, or even more, for the result is not really an issue. Therefore, most of the heavy computations can be done on a remote server.”

The project is being funded by the Merchant Charity Foundation and is a collaboration with dermatology and skin cancer research experts Professor Peter Soyer and Professor Monika Janda from the University of Queensland.

Sharper image

Eriksson said that, in addition to testing the skin cancer identification technology for accuracy, it will be important to address issues such as image quality, which can significantly affect the results.

For example, lighting, shadows and camera shake can all affect image quality.

Eriksson said achieving ease of use and reliability, along with accuracy, will require a high level of expertise in computer vision, mathematical modelling, machine learning and distributed computing, and these are challenges the team will need to overcome.

“Also, since this is a project with a medical application, there might be ethical, legal and/or privacy issues that need to be resolved,” he said.

This article originally appeared as “Spot the difference” in the May 2019 issue of create magazine.