Researchers have found that sounds can help robots differentiate between objects and improve perception.

In the rapidly developing field of robotics, machines have generally relied on sight, and increasingly touch, for sensory perception. But research by a team at Carnegie Mellon University (CMU) in the United States suggests that adding hearing to the mix could be the next great leap forward.

“The goal of this project was to study and understand the role sound can play in robotics,” said project researcher Dr Lerrel Pinto, who recently joined New York University as an assistant professor.

Preliminary research in other fields indicated that sound could be useful, but Pinto said it wasn’t clear just how useful it would be in robotics.

To test the theory, Pinto and his colleagues at CMU’s Robotics Institute undertook what they described as the first large-scale study of its kind into the interactions between sound and robotic action.

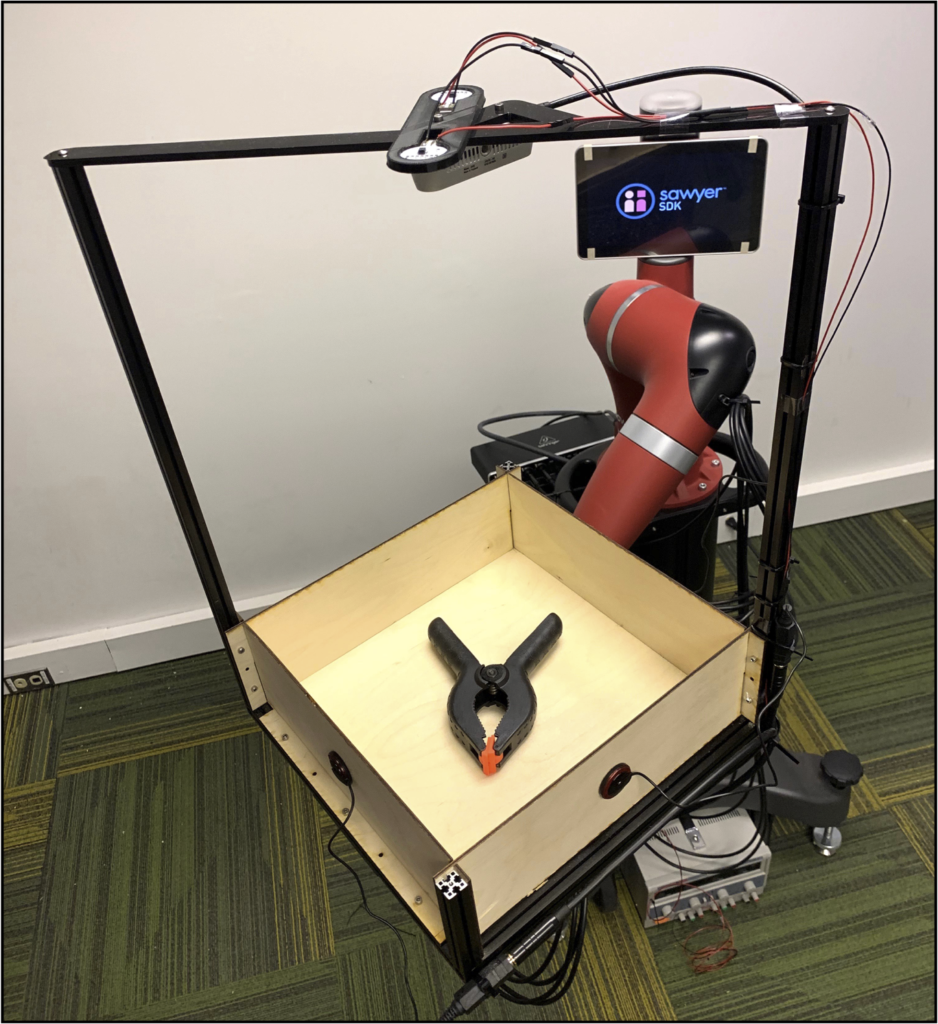

“To do this, we created a robot called Tilt-Bot, which tilts objects in a tray until they hit the side of that tray,” Pinto explained.

“The sound created by the object striking the side of the tray is recorded using contact microphones.”

Tilt-Bot consists of a Sawyer robot arm fitted with a tray, into which the team placed different items. The research drew on technology from a number of fields, including computer vision, machine learning, robotics and audio-based learning.

Pinto and his fellow researchers were highly encouraged by the results.

“Our key findings were that sounds are really helpful in understanding what object is creating the sound, what action was applied on the object, and what are the physical properties of this object,” he said.

“One particularly fascinating result was that just by the sound of an object, our models were able to correctly recognise the object between 76% and 78% of the time, while random prediction would be accurate around 2% of the time.”
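The "around 2%" random baseline follows from simple arithmetic. Assuming the recognition task covers all 60 objects in the Tilt-Bot dataset and a random guesser picks each object with equal probability, chance accuracy is 1/60. The short sketch below (the function names are illustrative, not taken from the researchers' code) computes that baseline and how far above it the reported 76% to 78% lies.

```python
def chance_accuracy(num_classes: int) -> float:
    """Expected accuracy of a uniform random guess over num_classes labels."""
    if num_classes <= 0:
        raise ValueError("num_classes must be positive")
    return 1.0 / num_classes


def improvement_over_chance(model_accuracy: float, num_classes: int) -> float:
    """How many times more accurate a model is than uniform random guessing."""
    return model_accuracy / chance_accuracy(num_classes)


# Assumption: recognition is a choice among all 60 objects in the dataset.
NUM_OBJECTS = 60

baseline = chance_accuracy(NUM_OBJECTS)
print(f"Random-guess accuracy: {baseline:.1%}")  # 1/60 is about 0.0167, printed as 1.7%

for acc in (0.76, 0.78):
    factor = improvement_over_chance(acc, NUM_OBJECTS)
    print(f"{acc:.0%} accuracy is about {factor:.0f}x chance")
```

Under that assumption, 1/60 (about 1.7%) rounds to the quoted 2%, and the models' 76% to 78% accuracy is roughly 46 to 47 times better than guessing.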

Pinto said the research shows that sound provides information that can improve a robot’s understanding of objects. A robot can even apply what it learned from the sounds of one set of objects to predict the physical properties of items it has never encountered.

“This is valuable in settings where other sensor modalities like vision or touch cannot provide information,” he said.

“One such situation is when manipulating objects in low light or with other objects obstructing the robot’s view.”

The researchers also found that the robot’s failures were fairly predictable. For example, it could not use sound to tell a red block from a green block.

“But if it was a different object, such as a block versus a cup, it could figure that out,” Pinto said.

In the course of the study, the researchers recorded video and audio of 60 common objects, such as shoes, apples and tennis balls, creating a large dataset of some 15,000 interactions.

The dataset has recently been released to the public, and Pinto is excited to see how other researchers will use it.

“Currently, our focus has been on showing what sort of information we can give a robot,” he said.

“The next step is to use this information to allow robots to make better decisions when manipulating objects.”