In the global race to build a quantum computer, Australia is well positioned, and Professor Andrew Dzurak from the University of NSW is one of the leading contenders.

Professor Andrew Dzurak from UNSW.

Silicon-based computing touches seemingly everything we do. The next revolution in computing could therefore be profound, and – if Australia’s leaders in the world of quantum computing have their way – it, too, will be silicon-based.

University of NSW Professor in Nanoelectronics Andrew Dzurak returned from Cambridge in 1994. Since then, he has been part of UNSW’s emergence as a quantum leader, and was involved in the earliest work on silicon quantum computing.

He credits Australia’s strong position in the global race in quantum computing to two things: the vision of Professor Bob Clark, who also established the Special Research Centre for Quantum Computer Technology in 2000, and Bruce Kane’s seminal 1998 paper in Nature.

It is “impossible to overstate” the impact of Kane’s proposal, the first to suggest using individual phosphorus atoms in silicon as qubits.

The idea of phosphorus atoms in silicon as qubits was seen as clever, but impossible with the technology of the time. Since then, the university and its collaborators have crept steadily closer to making it happen. The focus on a Kane computer is well known internationally, and a few years ago MIT Technology Review described it as “almost an obsession in Australia”.

UNSW’s single phosphorus atom computing effort continues, led by Professor Michelle Simmons, director of the Centre of Excellence for Quantum Computation and Communication. The university also pursues other silicon quantum computing approaches.

“We have essentially three different ways to make qubits,” UNSW’s Professor Andrea Morello told create, adding that “from a helicopter view” they are almost the same.

“They are all quite complementary to each other, so there is no point for any of us at this stage picking winners or predicting winners.”

The appeal of qubit-based computing, if it can be managed, lies in how its capacity grows as qubits are added: each extra qubit doubles the number of states the register can hold in superposition at once. The popular example is that 300 qubits can represent more states than there are atoms in the observable universe.
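The arithmetic behind that popular example is easy to check. The sketch below is illustrative only: it assumes the commonly quoted order-of-magnitude figure of about 10^80 atoms in the observable universe, and the constant and helper names are hypothetical, not from any library.

```python
# A minimal sketch: n qubits span 2**n basis states, and a superposition
# carries an amplitude for each of them at once.
# ASSUMPTION: ~10**80 atoms in the observable universe (order-of-magnitude estimate).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10**80


def basis_states(n_qubits: int) -> int:
    """Number of distinct bit patterns an n-qubit register spans."""
    return 2**n_qubits


def qubits_to_exceed(count: int) -> int:
    """Smallest number of qubits whose basis-state count exceeds `count`."""
    n = 0
    while basis_states(n) <= count:
        n += 1
    return n


states = basis_states(300)
print(f"2**300 has {len(str(states))} digits")  # 91 digits, roughly 2 x 10**90
print(states > ATOMS_IN_OBSERVABLE_UNIVERSE)  # True
print(qubits_to_exceed(ATOMS_IN_OBSERVABLE_UNIVERSE))  # 266
```

The point is not that 300 qubits store 10^90 numbers you can read out (a measurement returns just 300 bits), but that describing their joint state takes that many amplitudes, which is why simulating such a register on a conventional computer quickly becomes infeasible.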

However, maintaining quantum effects such as entanglement is diabolically tricky. A superposition is very easily decohered (scrambled), even just by observing it, and quickly collapses back into a plain zero or one.

For this reason, efforts typically involve liquid helium cryogenics – cooling systems to near-absolute zero – as even thermal vibrations can destroy a quantum state.

Many exotic approaches are also used to make qubits, for example anyons, photons and nitrogen-vacancy centres in diamond. However, UNSW is leading the way in silicon, and its researchers believe that the material’s long manufacturing history offers massive scalability advantages.

World-first result

Last October, a group led by Dzurak published a world-first result in Nature: a two-qubit logic gate in silicon, performing a simple CNOT operation, and created using several fabrication methods and materials common to the electronics industry.
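For readers new to CNOT (controlled-NOT): it flips a target qubit only when a control qubit is 1. The sketch below simulates the gate on a two-qubit state vector in plain Python and shows that a Hadamard on the control followed by CNOT yields an entangled Bell state. It is an idealised, noise-free textbook model; the function names are hypothetical, and nothing here describes how the UNSW device is actually driven.

```python
import math

# Two-qubit state vector: amplitudes for basis states |control target>,
# indexed as 2*control + target, i.e. |00>, |01>, |10>, |11>.
LABELS = ["00", "01", "10", "11"]


def apply_hadamard_to_control(state):
    """Apply H to the control qubit: H|0> = (|0>+|1>)/sqrt2, H|1> = (|0>-|1>)/sqrt2."""
    s = 1 / math.sqrt(2)
    new = [0j] * 4
    for idx, amp in enumerate(state):
        control, target = idx >> 1, idx & 1
        new[(0 << 1) | target] += s * amp
        new[(1 << 1) | target] += (s if control == 0 else -s) * amp
    return new


def apply_cnot(state):
    """Flip the target bit of every basis state whose control bit is 1."""
    new = [0j] * 4
    for idx, amp in enumerate(state):
        control, target = idx >> 1, idx & 1
        new[(control << 1) | (target ^ control)] += amp
    return new


def probabilities(state):
    """Measurement probabilities for the basis states with non-zero amplitude."""
    return {LABELS[i]: round(abs(a) ** 2, 3) for i, a in enumerate(state) if abs(a) > 1e-12}


# Truth table: CNOT maps |00>->|00>, |01>->|01>, |10>->|11>, |11>->|10>.
for i, label in enumerate(LABELS):
    basis = [0j] * 4
    basis[i] = 1
    print(label, "->", list(probabilities(apply_cnot(basis))))

# Entanglement: start in |00>, put the control in superposition, then apply CNOT.
bell = apply_cnot(apply_hadamard_to_control([1, 0, 0, 0]))
print(probabilities(bell))  # {'00': 0.5, '11': 0.5}
```

The final state gives 00 or 11 with equal odds and never 01 or 10: measuring one qubit fixes the other, which is the entanglement a two-qubit gate makes possible and single-qubit operations alone cannot create.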

The aluminium MOSFET gates, at 30 nanometres, weren’t even particularly small compared to what’s seen on current chips.

The devices were assembled in about 40 or 50 steps, starting from bare silicon, using many methods familiar to anyone working in electronics, such as electron beam lithography, PMMA resist, photomasking, and doping in a silicon furnace.

“What we’ve done is we’ve reduced the number of electrons in that transistor down to just one electron,” explained Dzurak.

The spin (and the associated magnetic moment) of each electron encodes the information (up or down represents zero or one) and is manipulated by an oscillating magnetic field. Electrons are positioned neatly at an interface between silicon and silicon dioxide. The state of the spin is read by a single-electron transistor on the chip, which has four layers of gate electrodes.
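How does an oscillating field flip a spin? In the idealised textbook picture, a field oscillating exactly at the electron’s spin-resonance frequency rotates the spin steadily between down and up, so the probability of finding it flipped after driving for a time t is sin²(Ωt/2), where Ω is the Rabi frequency set by the field strength. The sketch below assumes perfect resonance and no decoherence, and its 1 MHz Rabi frequency is a hypothetical round number, not a figure from the UNSW experiment.

```python
import math

# ASSUMPTION: idealised resonant drive, no detuning, no decoherence.
# Rabi frequency of 1 MHz is hypothetical, for illustration only.
RABI_FREQUENCY_HZ = 1e6
OMEGA = 2 * math.pi * RABI_FREQUENCY_HZ  # angular Rabi frequency, rad/s


def flip_probability(t_seconds: float, omega: float = OMEGA) -> float:
    """Probability that a spin starting 'down' (0) is measured 'up' (1) after driving for t."""
    return math.sin(omega * t_seconds / 2) ** 2


def pi_pulse_duration(omega: float = OMEGA) -> float:
    """Drive time for a complete 0 -> 1 flip (a 'pi pulse')."""
    return math.pi / omega


t_pi = pi_pulse_duration()
print(f"pi pulse: {t_pi * 1e9:.0f} ns")  # 500 ns
print(f"P(up) after pi pulse:   {flip_probability(t_pi):.3f}")  # 1.000
print(f"P(up) after pi/2 pulse: {flip_probability(t_pi / 2):.3f}")  # 0.500, an equal superposition
```

Timing the pulse is how the same field produces either a clean flip (a NOT) or a superposition; any error in that timing, or noise during it, shows up directly as the control errors Dzurak describes below.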

The entire chip was made at the Australian National Fabrication Facility (ANFF) NSW node at UNSW’s Kensington campus. ANFF was established under the Federal Government’s National Collaborative Research Infrastructure Strategy.

“The final thing we do is we connect the chip into a measurement package that can then be plugged into a circuit board, much like you have a motherboard on your computer,” added Dzurak.

It is simpler than some other quantum devices, but controlling anything at the subatomic scale comes with many challenges.

Silicon wafers for a quantum computer at the Australian National Fabrication Facility.

“I could spend hours talking through some very technical matters related to the accuracy of the readout, accuracy of control etcetera, which are important to get the error rates down,” he said.

“Suffice to say that there remain many physics and engineering challenges in the readout and the control of the qubits, largely related to ensuring that the error rates are low.”

These qubits must also be operated and read out inside a closed-cycle liquid-helium refrigerator running below one kelvin to preserve the quantum state.

For now, Dzurak’s group is focused on characterising and understanding the two-qubit gate, rather than hurrying to increase the number of one- and two-qubit gates working in combination.

What is it good for?

There’s genuine interest in the possible impact of quantum computing, both here and elsewhere. The federal government committed an extra $26 million to UNSW’s labs last November, with Telstra and the Commonwealth Bank each pledging $10 million in support soon after (the CBA had announced $5 million in support in 2014).

One reason for the interest is that integrated circuits are approaching the physical limits of how much can be crammed onto them. Intel’s current 14-nanometre process can’t be shrunk much further, and with physical limits come predicted limits to growth in computing muscle.

One of the molecular beam epitaxy units at UNSW.

But what would a quantum computer actually be good for, beyond being hypothetically faster? One answer is finding the optimal solution to a problem with many interacting variables.

“Where [there’s] a large number of possible combinations, you can make use of the fact that a quantum system can kind of run in parallel through all the possibilities,” Morello explained.

“Another, and probably the most interesting application is the one of simulating other quantum systems, such as molecules in drugs.

“If you want to understand the internal structure as well as the chemical reactions of a complex molecule, that is basically impossible to do on a normal computer because a molecule is a quantum object, whereas you can use a quantum computer as a form of hardware simulator for the quantum object that is the molecule.”

Two famous examples that sparked interest in quantum computing were Peter Shor’s algorithm for factoring large numbers, detailed in 1994, and Lov Grover’s algorithm from 1996 for database searches.

The former has huge implications for security: on a large enough quantum computer, Shor’s algorithm could factor the large numbers that RSA encryption (used to protect internet data transmissions) relies on, rendering that encryption useless. Sampling is another area of interest, and one of three sets of applications (along with optimisation and machine learning) for which Canadian quantum computing company D-Wave advertises its technology.

Sampling involves checking a computerised model against a sample of real-world data, testing how well the two align.
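Returning to Shor’s algorithm: it turns factoring a number N into finding the period (or order) r of a^x mod N for a randomly chosen a, and only that period-finding step needs a quantum computer. The sketch below keeps the standard classical reduction but substitutes brute-force order finding for the quantum step, so it only works for toy numbers such as 15. The function names are hypothetical, for illustration.

```python
import math


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.
    This is the step Shor's algorithm performs efficiently on a quantum computer.
    Assumes gcd(a, n) == 1, so such an r exists."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_reduction(n: int, a: int):
    """Try to split n using base a (2 <= a <= n - 2); returns a factor pair or None."""
    g = math.gcd(a, n)
    if g != 1:
        return g, n // g  # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: try a different a
    y = pow(a, r // 2, n)  # y*y == 1 (mod n) and y != 1
    if y == n - 1:
        return None  # trivial square root of 1: try a different a
    p = math.gcd(y - 1, n)  # y is a non-trivial square root of 1, so p splits n
    return p, n // p


print(shor_reduction(15, 7))  # (3, 5)
print(shor_reduction(15, 14))  # None: 14 has order 2 and 14 == 15 - 1
```

For a real RSA modulus of 2048 bits, the brute-force loop in `find_order` would never finish, which is exactly the gap the quantum period-finding step is meant to close.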

What areas interest CBA and Telstra? We can only guess. Telstra did not reply to queries, and the bank referred to a press release from December citing only “speed and power”.

Would the bank be keen to use quantum computing in Monte Carlo modelling, for example? “We can’t comment on our future plans or make predictions or speculate,” was its emailed response.

What next?

There is a chance that a useful universal quantum computer might never appear.

One scenario is what University of New Mexico physicist Professor Ivan Deutsch calls a quantum computing nightmare, where “the exponential speed-up provided by a quantum computer would be cancelled out by the exponential complexity needed to protect the system from crashing.”

As for what approaches have the best shot, that depends on who you ask.

Morello said superconducting and trapped-ion qubit approaches are “unequivocally” leading the race at the moment, though it is unclear whether that lead will last.

“They will most likely be the ones who first get to some very small scaling implementation, but it’s not clear that they will be the ones who go all the distance to the big quantum computers,” he said.

Ion traps are not solid-state devices, and they are very delicate as well as difficult to fabricate. The limitations for superconducting qubits are around size and scaling up, Morello said, not just in terms of footprint but also in controlling the electromagnetic environment.

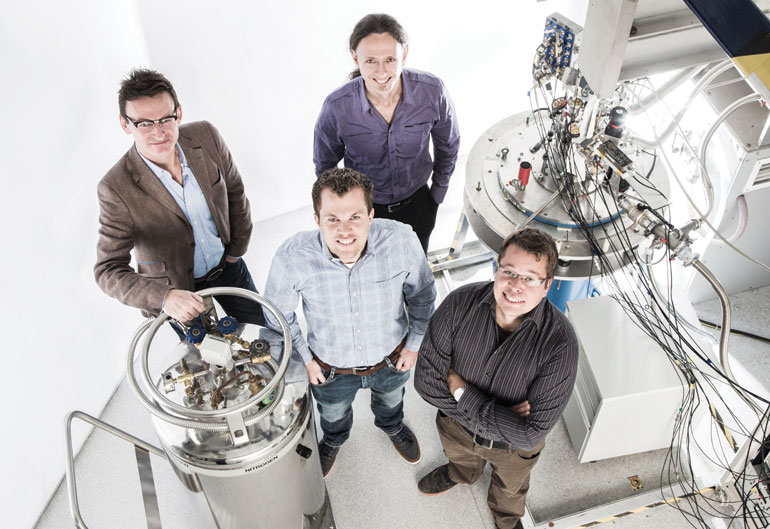

Researchers in the pristine environment of the Australian National Fabrication Facility.

“It’s in a different context, but the reason why your computer is a quad core computer and not a single core computer is because if they made a single core computer, it would already be too big for the signal to propagate across fast enough. Right? Something similar will happen with superconducting qubits.

“Quite quickly, the chip will become too big for the signals to propagate controllably and fast enough from one side to the other.”
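Morello’s analogy can be put in rough numbers: the distance a signal can cover in one clock cycle is its speed divided by the clock frequency. The sketch below assumes signals travel at about half the speed of light, a hypothetical round figure (real on-chip wiring is often much slower because of its resistance and capacitance), and it illustrates the scaling argument rather than modelling any real processor or qubit chip.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def distance_per_cycle(clock_hz: float, velocity_fraction: float) -> float:
    """Metres a signal covers in one clock period when it travels at velocity_fraction * c."""
    return velocity_fraction * SPEED_OF_LIGHT_M_PER_S / clock_hz


# ASSUMPTION: signals propagate at 0.5 c (hypothetical, optimistic for on-chip wiring).
for clock_hz in (1e9, 3e9, 10e9):
    d_cm = distance_per_cycle(clock_hz, velocity_fraction=0.5) * 100
    print(f"{clock_hz / 1e9:>4.0f} GHz: about {d_cm:.1f} cm per cycle")
```

The faster the signals must be switched, the smaller the region they can reach in time, so a chip that has to be addressed coherently from edge to edge runs into a size ceiling; splitting the work into smaller units, as multi-core processors do, is one way around it.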

The UNSW silicon approaches have two advantages, size and manufacturability, with the idea being to leverage the decades of work and trillions of dollars invested in semiconductor production.

Transferring the technology from the lab to the factory is one of the key next steps for Dzurak’s group.

In the short term, manufacture would happen overseas (with a view to building capacity in Australia to create the electronics here in the longer term if possible).

The MOSFET-based electron qubits will need to be produced in a foundry, with sufficiently high yields.

“One of the key engineering challenges is going to be moving our designs that we’ve developed here at UNSW into large-scale manufacturing processes,” said Dzurak.

“What I mean by yield is if you make 1000 of them, do all 1000 work?”
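Dzurak’s yield question compounds quickly as device counts grow. Assuming, purely for illustration, that each device works independently with the same probability p, the chance that all n of them work is p^n. The 99.9% per-device figure below is hypothetical, and real defects are often correlated across a wafer, so this is only a back-of-the-envelope model.

```python
def all_working_probability(per_device_yield: float, n_devices: int) -> float:
    """Chance that every one of n independent devices works (independence is an assumption)."""
    return per_device_yield ** n_devices


def required_per_device_yield(target: float, n_devices: int) -> float:
    """Per-device yield needed for all n devices to work with probability `target`."""
    return target ** (1 / n_devices)


p = 0.999  # hypothetical per-device yield
for n in (10, 100, 1000):
    print(f"{n:>5} devices: P(all work) = {all_working_probability(p, n):.3f}, "
          f"expected working = {p * n:.1f}")

print(f"needed for a 90% chance that all 1000 work: {required_per_device_yield(0.9, 1000):.5f}")
```

Under these assumptions, even a 99.9% per-device yield leaves only a little over a one-in-three chance that all 1000 devices work, which is why foundry-grade yields matter as much as the physics.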

Getting beyond the two- and three-qubit circuits the group is currently testing is a big step, and it is well understood that scale will be needed to do anything useful with a quantum computer. For example, the scientific journal Nature reported in 2014 that ETH Zurich Professor of Computational Physics Matthias Troyer said it would take “thousands” of qubits to do serious factorisation using Shor’s algorithm.

The race to create a quantum computer is on, but progress is tricky and cautious. For at least another five years, Dzurak reckons, the capabilities at ANFF will be sufficient to move to the 10- and 15-qubit level.

Still, last year’s breakthrough overcame a massive challenge, and years from now it could be remembered as a turning point. Building a quantum computer starts with a qubit, and in many ways the one- and two-qubit breakthroughs are the big ones.

“The rest is effectively stringing together more bits, but still doing one qubit operations and two qubit operations,” Dzurak said.

“That is why that paper last year in October was such a significant breakthrough and got the attention in Nature that it did. It was really doing that two qubit logic that then says ‘right, we can now go all the way.’”