Can machine learning and collaboration help robots learn to build Lego models? Yotto Koga hopes so, and hopes the construction and manufacturing industries might benefit too.

They’re often deployed to the dull, dirty and dangerous, but there’s one thing robots remain: dumb. Add inflexible, and they look a poor match for manufacturing in the future, which will have to satisfy consumers who want their goods customised — and want them yesterday.

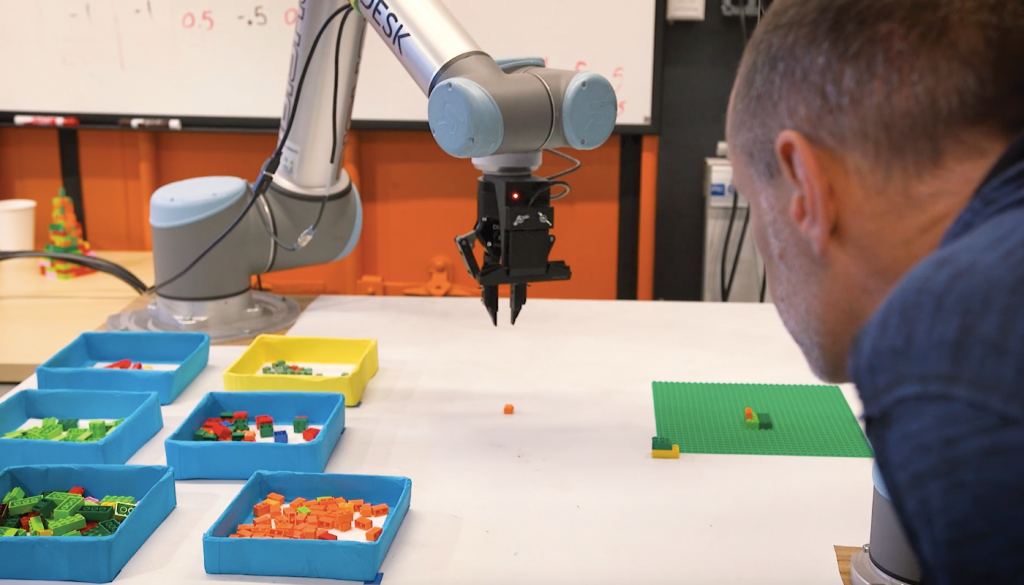

A project within design software company Autodesk is trying to make robots more capable — able to assemble things when given information on what to build rather than how to build it. Getting there is not easy, and the early steps in the project involve Lego.

“We wanted to see, has machine learning progressed enough where we can hopefully make robots easier to use and make them accessible to all of our customers?” the project’s lead, Dr Yotto Koga, told create.

“Working with Lego bricks in particular is kind of interesting; it’s fun, but also you can do some interesting assemblies without having to worry about things like fasteners or glue or welding. You can just kind of snap the pieces together and you can get some pretty interesting shapes.”

Koga is Software Architect at Autodesk’s AI Lab, and is based at the company’s Pier 9 centre in San Francisco. He has a PhD in robotics from Stanford University’s School of Engineering, and is currently the only full-timer on the project, called BrickBot, working with two others.

He said the project uses task-level approaches to robotics. Instead of programming at the nitty-gritty level, the project wanted to answer a question: “Could we have a system where you could command the robots at that higher level?”

“Pick up this part that we need for the assembly, re-grasp it if we need to get it into the right pose, use tools if you need to put those pieces together, and then ultimately place it into that final assembly,” he said.

The work is broken into four task levels: picking a part from among differently shaped and coloured bricks; reorienting the selected part if, for example, it is upside-down; tooling; and assembling. The assemblies to be built start out as CAD models, which the TinkerCAD program breaks into Lego pieces.
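As a rough illustration of what commanding a robot at the task level could look like, the sketch below expands a list of bricks into the four steps described above. It is not BrickBot's code: the names `Brick`, `plan_brick` and `plan_assembly` are hypothetical, and both the bottom-layer-first ordering and the flags for reorienting and tooling are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Brick:
    """Hypothetical description of one Lego piece in the target assembly."""
    shape: str                       # e.g. "2x4"
    colour: str                      # e.g. "red"
    position: Tuple[int, int, int]   # target coordinates (x, y, layer)
    upside_down: bool = False        # how the brick happens to lie when picked
    needs_tool: bool = False         # assumed flag: a tool is needed to seat it


def plan_brick(brick: Brick) -> List[str]:
    """Expand one brick into task-level steps: pick, reorient, tool, assemble."""
    steps = [f"pick {brick.colour} {brick.shape}"]
    if brick.upside_down:
        steps.append("reorient")
    if brick.needs_tool:
        steps.append("use tool")
    steps.append(f"place at {brick.position}")
    return steps


def plan_assembly(bricks: List[Brick]) -> List[str]:
    """Order bricks bottom layer first (an assumption), then expand each one."""
    ordered = sorted(bricks, key=lambda b: b.position[2])
    return [step for brick in ordered for step in plan_brick(brick)]
```

The caller states only what to build, as a list of bricks and target positions. The lower-level question of how each step is carried out is left to whatever executes the plan.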

The system uses machine learning to get better at making things with Lego. Labelled datasets are generated in simulation, reproducing what the camera sees and adding sensor data such as where the robot’s two gripper fingers go when grasping parts. Manually putting the robots through their motions would take too long, because machine learning needs many examples.

“We try to do everything in simulation,” Koga said.

“Now that’s sort of the simulated to transfer learning column that we have working. It works pretty well. We do have some additional sensing going on so that when we detect failure, then we’ll go back and try again. And it seems through that sort of loop we get a pretty reliable system, mixing in the sort of machine-learned models along with getting it to work on the real robots.”
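The detect-failure-and-try-again loop Koga describes can be sketched in a few lines. This is only an illustration under stated assumptions, not the project's implementation: `attempt_with_retry` and `make_flaky_grasp` are hypothetical names, the cap of three attempts is arbitrary, and the real sensing check is replaced here by a simulated success rate.

```python
import random
from typing import Callable


def attempt_with_retry(action: Callable[[], bool], max_attempts: int = 3) -> int:
    """Run `action` until it reports success; return the number of attempts used.

    `action` stands in for one robot step plus the sensing check that
    reports whether it worked. Raises RuntimeError if every attempt fails.
    """
    for attempt in range(1, max_attempts + 1):
        if action():
            return attempt
    raise RuntimeError(f"step failed after {max_attempts} attempts")


def make_flaky_grasp(success_rate: float, seed: int = 0) -> Callable[[], bool]:
    """Simulated grasp that succeeds with the given probability (an assumption)."""
    rng = random.Random(seed)
    return lambda: rng.random() < success_rate
```

A call such as `attempt_with_retry(make_flaky_grasp(0.7))` returns how many tries the simulated grasp needed. The point of the design is that an imperfect learned model can still yield a dependable system when each step is checked and repeated on failure.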

The project began in mid-2016, and Koga concedes there’s a long way to go before it is ready for the real world. The company plans to scale it up with partners in both the manufacturing and construction sectors.

“We’re also looking at the construction of simple shapes coming together without support structures, so we can again maybe use multiple robots to assist in the placement of the parts so that they can sort of self-support and create more complicated structures,” he said.

“There’s still quite a lot to do. But those are some of the steps that we’re taking, trying to get it ultimately into customer hands.

“We’re looking and working with a few customers to cherry-pick some real problems that we think aren’t too far a step from where we are [currently] with BrickBot.”

This article originally appeared as “Brick by brick” in the February 2019 edition of create magazine.
